Naive Bayes is a fundamental classification algorithm in machine learning and data science. This probabilistic model, rooted in Bayesian statistics, is known for its efficiency, its simplicity, and its surprisingly robust performance across a wide range of applications. The Naive Bayes classifier is based on Bayes' theorem, a principle of probability theory and statistics that describes the relationship between conditional probabilities. In other words, it provides a way to calculate the probability of an event (variable) based on prior knowledge of another, related variable.

The "naive" in Naive Bayes refers to the assumption that all features in the dataset are conditionally independent of one another given the class label. This assumption simplifies the calculation of the posterior probabilities and makes the algorithm computationally efficient. The assumption rarely holds exactly in real-world data. Even so, the Naive Bayes classifier often performs well when the features are not independent.

There are several types of Naive Bayes models, including Gaussian Naive Bayes, Multinomial Naive Bayes, and Bernoulli Naive Bayes. Each type suits a different kind of data:

- Multinomial Naive Bayes is typically used for count features such as word frequencies.
- Bernoulli Naive Bayes is typically used for binary features.

In this post, I focus on the Gaussian Naive Bayes classifier, which assumes that, within each class, each feature follows a normal distribution. This variant is particularly useful for continuous data.

How does the Gaussian Naive Bayes classifier work?

The Gaussian Naive Bayes classifier operates under the framework of Bayes' theorem, which in its simplest form can be expressed as:

\begin{equation*} \mathbf{P} \left(Y | X \right)= \frac {\mathbf{P} \left(X | Y \right) \mathbf{P} \left( Y \right)} {\mathbf{P} \left( X \right)} \end{equation*}

In the context of the Gaussian Naive Bayes classifier, Y and X are events where Y is the hypothesis (class label) and X is the evidence (features). $\mathbf{P} \left(Y | X \right)$ is the posterior probability, $\mathbf{P} \left(X | Y \right)$ is the likelihood, $\mathbf{P} \left(Y \right)$ is the prior probability, and $\mathbf{P} \left(X \right)$ is the evidence.

- Posterior probability $P \left(Y | X \right)$: the probability that an instance X (a data point with specific feature values) belongs to class Y.

- Likelihood $P \left(X | Y \right)$: the probability of observing the feature values X given that the class label is Y. In the Gaussian Naive Bayes classifier, this is computed with the Gaussian (normal) probability density, hence the name.

- Prior probability $P \left(Y \right)$: the initial probability of a specific class Y, calculated as the proportion of samples of that class in the training set.

- Evidence $P \left(X \right)$: the total probability of the features X. In practice, this term is often ignored during classification. It is the same for every class, so it does not change which class has the highest posterior probability.
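For reference, the two ingredients the classifier combines can be written out explicitly. The notation here is introduced for illustration:

- $x_1, \dots, x_n$ are the $n$ feature values of X.
- $\mu_{y,j}$ and $\sigma_{y,j}^2$ are the mean and variance of feature $j$ among the training samples of class $y$.

Under the naive assumption, the likelihood factorizes over the features, and each factor is a normal density. Because the evidence is shared by all classes, the prediction is the class that maximizes the numerator of Bayes' theorem. Taking logarithms gives the same argmax, and it is a common way to avoid numerical underflow when many small densities are multiplied.

\begin{equation*} \mathbf{P} \left(X | Y=y \right) = \prod_{j=1}^{n} \mathbf{P} \left(x_j | Y=y \right) \end{equation*}

\begin{equation*} \mathbf{P} \left(x_j | Y=y \right) = \frac{1}{\sqrt{2 \pi \sigma_{y,j}^2}} \exp \left( -\frac{\left(x_j - \mu_{y,j}\right)^2}{2 \sigma_{y,j}^2} \right) \end{equation*}

\begin{equation*} \hat{y} = \arg\max_{y} \; \mathbf{P} \left(Y=y \right) \prod_{j=1}^{n} \mathbf{P} \left(x_j | Y=y \right) = \arg\max_{y} \left[ \log \mathbf{P} \left(Y=y \right) + \sum_{j=1}^{n} \log \mathbf{P} \left(x_j | Y=y \right) \right] \end{equation*}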

The next image shows pseudocode that outlines the basic Naive Bayes algorithm for classification.

Python Implementation

Unlike K-nearest neighbors, Gaussian Naive Bayes has no number-of-neighbors hyperparameter K to choose. All the model needs are the class priors and the per-class means and variances of the features, and these are estimated directly from the training data.

Importing Libraries

First, we import the libraries the code needs:

```python
import numpy as np
import seaborn as sns
import matplotlib.pyplot as plt
from matplotlib.colors import ListedColormap
from sklearn.naive_bayes import GaussianNB
from sklearn.metrics import confusion_matrix
from sklearn import datasets
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score
```

- numpy: Provides mathematical functions to operate on arrays.

- sklearn: One of the most popular libraries for machine learning in Python. It features many machine learning algorithms, including the Naive Bayes algorithm (imported as GaussianNB). It also provides tools for model fitting, data preprocessing, model selection, model evaluation and other utilities.

- seaborn and matplotlib: These are visualization libraries in Python for creating static, animated, and interactive visualizations, as well as drawing attractive and informative statistical graphs.

Defining Functions

Now that we have imported the libraries, we can proceed to implement the Naive Bayes algorithm. We define two functions: calculate_parameters and naive_bayes.

```python
# This function calculates the per-class means, variances and prior probabilities
def calculate_parameters(X, y):
    unique_labels = np.unique(y)
    prior_probs = []
    means = []
    variances = []
    for label in unique_labels:
        # Prior probability of the label: fraction of training samples in that class
        prob = np.count_nonzero(y == label) / len(y)
        prior_probs.append(prob)
        # Mean of each feature within the class
        mean = X[y == label].mean(axis=0)
        means.append(mean)
        # Variance of each feature within the class
        variance = X[y == label].var(axis=0)
        variances.append(variance)
    return np.array(means), np.array(variances), np.array(prior_probs)


# This function applies the Gaussian Naive Bayes algorithm to a test dataset
def naive_bayes(Test_Dataset, Train_Dataset, Train_Labels):
    means, variances, prior_probs = calculate_parameters(Train_Dataset, Train_Labels)
    # Class labels in the same (sorted) order used by calculate_parameters
    unique_labels = np.unique(Train_Labels)
    Predicted_Labels = []
    # Classifying the test dataset
    for x in Test_Dataset:
        post_probs = []
        for i in range(len(prior_probs)):
            # Gaussian density P(x_j | y) for each feature x_j
            numerator = np.exp(-(x - means[i])**2 / (2 * variances[i]))
            denominator = np.sqrt(2 * np.pi * variances[i])
            # P(x | y): product over features (naive independence assumption)
            p_x_given_y = np.prod(numerator / denominator)
            # Unnormalized posterior P(y | x) ∝ P(x | y) P(y); P(x) is omitted
            prob_class_i = p_x_given_y * prior_probs[i]
            post_probs.append(prob_class_i)
        # Assigning the class label with the highest posterior probability
        probable_class = unique_labels[np.argmax(post_probs)]
        Predicted_Labels.append(probable_class)
    return np.array(Predicted_Labels)
```

The calculate_parameters function takes the training dataset and the corresponding labels as inputs. It calculates the mean and variance for each feature for each class, as well as the prior probability of each class. These parameters are used later when applying the Naive Bayes algorithm.

Next, we define the naive_bayes function, which applies the Naive Bayes algorithm to a test dataset. It takes the test dataset, the training dataset, and the training labels as inputs, and returns the predicted labels for the test dataset. This function calculates the posterior probability for each class for each data point in the test set, based on the parameters calculated by the calculate_parameters function. It then assigns the class with the highest posterior probability to each data point.
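Multiplying many densities, as naive_bayes does, can underflow to zero when there are many features or extreme values. Below is a minimal vectorized sketch that works in log space instead. The function name `gaussian_nb_predict_log` and its `var_smoothing` argument are hypothetical. The smoothing term is meant to mimic, as we understand it, the stabilizing step sklearn's GaussianNB applies, which adds a small fraction of the largest feature variance to every variance. It is not sklearn's actual code.

```python
import numpy as np


def gaussian_nb_predict_log(X_test, X_train, y_train, var_smoothing=1e-9):
    """Hypothetical vectorized Gaussian Naive Bayes predictor working in log space."""
    X_train = np.asarray(X_train, dtype=float)
    X_test = np.asarray(X_test, dtype=float)
    y_train = np.asarray(y_train)
    labels = np.unique(y_train)

    # Per-class parameters; means and variances have shape (n_classes, n_features)
    means = np.array([X_train[y_train == c].mean(axis=0) for c in labels])
    variances = np.array([X_train[y_train == c].var(axis=0) for c in labels])
    # Small variance floor (assumption: mirrors the idea behind sklearn's var_smoothing)
    variances = variances + var_smoothing * X_train.var(axis=0).max()
    log_priors = np.log(np.array([np.mean(y_train == c) for c in labels]))

    # Broadcast (n_test, 1, n_features) against (1, n_classes, n_features)
    diff = X_test[:, None, :] - means[None, :, :]
    # Sum of per-feature Gaussian log-densities -> shape (n_test, n_classes)
    log_likelihood = -0.5 * np.sum(
        np.log(2 * np.pi * variances)[None, :, :] + diff**2 / variances[None, :, :],
        axis=2,
    )

    # Unnormalized log posterior; log P(X) is identical for all classes and is dropped
    log_posterior = log_likelihood + log_priors[None, :]
    return labels[np.argmax(log_posterior, axis=1)]


# Usage on a tiny toy dataset with non-consecutive labels
X_toy = np.array([[0.0, 0.1], [0.2, -0.1], [-0.1, 0.0],
                  [5.0, 5.2], [4.8, 5.1], [5.1, 4.9]])
y_toy = np.array([3, 3, 3, 7, 7, 7])
X_query = np.array([[0.1, 0.0], [5.0, 5.0]])
print(gaussian_nb_predict_log(X_query, X_toy, y_toy))  # [3 7]
```

Summing log-densities ranks the classes exactly as the product does. The difference is that the log version stays finite where the product would round to zero. Indexing `labels` with the argmax returns the original class labels, so labels such as 3 and 7 are handled correctly.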

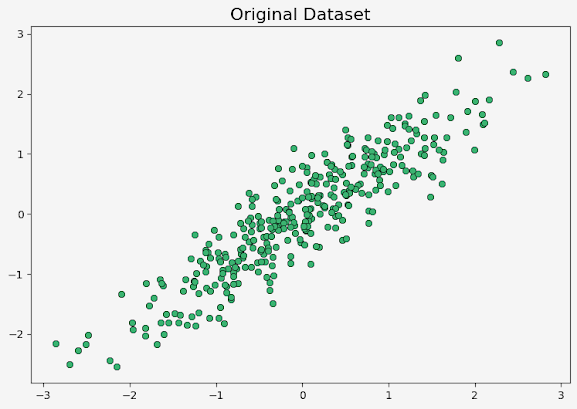

Generating and Visualizing Dataset

Now that the Naive Bayes classifier is ready, we need a dataset to apply it to. For this post, we generate a synthetic dataset using the make_circles function from the sklearn library. This function generates a large circle containing a smaller circle in two dimensions. We can control three things:

- the number of data points;
- the amount of noise in the data;
- the ratio between the radii of the inner and outer circles, through the factor parameter.

```python
# Setting parameters for the dataset
n_points = 3500  # Number of data points
noise = 0.12  # Standard deviation of Gaussian noise added to the data
color_map = ListedColormap(['mediumseagreen', 'mediumblue'])  # Color map for the two classes

# Creating dataset
X, y = datasets.make_circles(n_samples=n_points, noise=noise, factor=0.6, random_state=0)
```
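A per-class Gaussian may look ill-suited to concentric circles, since both classes are centered near the origin. It can still separate them because the two classes differ in variance. The outer circle spreads further, so its fitted Gaussians are wider. Comparing two zero-mean Gaussians with different variances yields a circular decision boundary.

The sketch below checks this idea with NumPy only, under these assumptions:

- It uses a hypothetical helper, `make_circles_like`, that imitates make_circles with evenly spaced angles on circles of radius 1 and `factor`, plus Gaussian noise. It is not sklearn's implementation.
- It treats each class as having zero mean, equal priors, and a single variance shared by both features.

```python
import numpy as np


def make_circles_like(n_samples, noise, factor, seed=0):
    """Hypothetical NumPy imitation of two concentric noisy circles.

    Outer circle (radius 1) gets label 0, inner circle (radius `factor`) label 1.
    """
    rng = np.random.default_rng(seed)
    n_out = n_samples // 2
    n_in = n_samples - n_out
    # Evenly spaced angles on each circle
    t_out = np.linspace(0, 2 * np.pi, n_out, endpoint=False)
    t_in = np.linspace(0, 2 * np.pi, n_in, endpoint=False)
    outer = np.column_stack([np.cos(t_out), np.sin(t_out)])
    inner = factor * np.column_stack([np.cos(t_in), np.sin(t_in)])
    # Add isotropic Gaussian noise to every point
    X = np.vstack([outer, inner]) + rng.normal(scale=noise, size=(n_samples, 2))
    y = np.concatenate([np.zeros(n_out, dtype=int), np.ones(n_in, dtype=int)])
    return X, y


X_demo, y_demo = make_circles_like(3500, noise=0.12, factor=0.6)

# Per-class means (close to the origin for both classes) and variances
for label in (0, 1):
    pts = X_demo[y_demo == label]
    print(label, pts.mean(axis=0).round(3), pts.var(axis=0).round(3))

# Simplification: one variance per class, averaged over the two features
v0 = X_demo[y_demo == 0].var(axis=0).mean()
v1 = X_demo[y_demo == 1].var(axis=0).mean()

# With zero means and equal priors, the 2-D log-likelihoods (up to a shared
# constant) are equal where  -log(v0) - r^2/(2 v0) = -log(v1) - r^2/(2 v1)
r_boundary = np.sqrt(2 * np.log(v0 / v1) / (1 / v1 - 1 / v0))
print("approximate decision radius:", round(float(r_boundary), 3))
```

With these parameters, the class variances come out at about 0.51 (outer) and 0.19 (inner). The approximate boundary radius then falls between the inner radius 0.6 and the outer radius 1.0. Points closer to the origin than that radius are assigned to the inner class.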

After generating the dataset, we use matplotlib to create a scatter plot of the data points. The two classes are represented by different colors.

```python
# Plotting generated dataset
plt.rcParams['font.size'] = '12'
fig, ax = plt.subplots(figsize=(8,6), facecolor='#F5F5F5')
ax.set_facecolor('#F5F5F5')

# Creating a scatter plot
scatter = ax.scatter(X[:, 0], X[:, 1], c=y, s=50, cmap=color_map, alpha = 0.4)

# Adding a legend to the plot
ax.legend(handles=scatter.legend_elements()[0], labels=['0', '1'], title = 'Classes')
ax.set_title("Generated Dataset", fontsize=15)
```

Splitting the Dataset for Train and Test

After generating the dataset, the next step is to split it into a train set and a test set. The train set is used to fit the Naive Bayes classifier, that is, to calculate the means, variances and prior probabilities. The test set is used to evaluate the classifier's performance on unseen data. A common practice is to allocate 75% of the dataset to the train set and 25% to the test set.
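To make the split concrete, here is a minimal NumPy sketch of a shuffled train/test split. The helper `shuffle_split` is hypothetical. It does not reproduce train_test_split's exact shuffling, so the same seed will give a different partition than `random_state=0` does in sklearn.

```python
import numpy as np


def shuffle_split(X, y, test_size=0.25, seed=0):
    """Hypothetical NumPy stand-in for a shuffled train/test split."""
    rng = np.random.default_rng(seed)
    n = len(X)
    n_test = int(np.ceil(test_size * n))  # round the test share up
    perm = rng.permutation(n)             # shuffle row indices once
    test_idx, train_idx = perm[:n_test], perm[n_test:]
    # Indexing X and y with the same indices keeps features and labels aligned
    return X[train_idx], X[test_idx], y[train_idx], y[test_idx]


X_demo = np.arange(3500 * 2, dtype=float).reshape(3500, 2)
y_demo = np.arange(3500) % 2
X_tr, X_te, y_tr, y_te = shuffle_split(X_demo, y_demo, test_size=0.25)
print(X_tr.shape, X_te.shape)  # (2625, 2) (875, 2)
```

For 3,500 points, a 25% test share gives 875 test points. That is the same total as the confusion-matrix counts this post reports (423 + 10 + 410 + 32).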

```python
# Splitting the dataset into a train set and a test set
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

# Creating a figure with two subplots
fig, ax = plt.subplots(1,2, figsize=(14,5), facecolor='#F5F5F5')

# Plotting the train set
ax[0].set_facecolor('#F5F5F5')
scatter = ax[0].scatter(X_train[:, 0], X_train[:, 1], c=y_train, s=50, cmap=color_map, alpha = 0.4)
ax[0].legend(handles=scatter.legend_elements()[0], labels=['0', '1'], title = 'Classes')
ax[0].set_title("Train Dataset", fontsize=14)

# Plotting the test set
ax[1].set_facecolor('#F5F5F5')
scatter = ax[1].scatter(X_test[:, 0], X_test[:, 1], c=y_test, s=50, cmap=color_map, alpha = 0.4)
ax[1].legend(handles=scatter.legend_elements()[0], labels=['0', '1'], title = 'Classes')
ax[1].set_title("Test Dataset", fontsize=14)
```

We use the train_test_split function from the sklearn library to perform this split. This function shuffles the dataset and then splits it into train and test sets. Then, we create scatter plots to visualize both sets using the matplotlib library. These plots are shown below:

Applying Naive Bayes Algorithm

We already have the dataset and the Naive Bayes classifier, so we can apply this classifier to the dataset and evaluate its performance.

The naive_bayes function takes the test set, the train set and the train labels as inputs, and returns the predicted labels for the test set as output. We then calculate the accuracy of the predictions using the accuracy_score function from sklearn.

```python
# Applying Naive Bayes algorithm
y_pred = naive_bayes(X_test, X_train, y_train)
print("The accuracy is:", accuracy_score(y_test, y_pred))
```

We can also use the GaussianNB model from the sklearn library. To do so, we create a classifier object, fit it to the train set, and then predict the labels for the test set. The accuracy of these predictions is again calculated with the accuracy_score function.

```python
# Applying Naive Bayes algorithm using model from Sklearn
bayes_sklearn = GaussianNB()
bayes_sklearn.fit(X_train, y_train)
y_pred = bayes_sklearn.predict(X_test)
print("The accuracy is:", accuracy_score(y_test, y_pred))
```

For both alternatives, the accuracy of the models is 0.95.

After making the predictions, we create a confusion matrix using the confusion_matrix function from sklearn. The confusion matrix is a table that is often used to describe the performance of a classification model on a test set for which the true values are known. Then, we visualize the confusion matrix using a heatmap from the seaborn library.
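The usual summary metrics can be read straight off a confusion matrix. The minimal NumPy sketch below makes two assumptions:

- It uses the counts this post reports for its test set: 423 and 10 for class 0, and 32 and 410 for class 1.
- It follows sklearn's convention that rows are true labels and columns are predicted labels.

```python
import numpy as np

# Counts reported for the test set (assumption: rows = true, columns = predicted)
cm_counts = np.array([[423, 10],
                      [32, 410]])

accuracy = np.trace(cm_counts) / cm_counts.sum()        # correct / all predictions
recall = np.diag(cm_counts) / cm_counts.sum(axis=1)     # per true class
precision = np.diag(cm_counts) / cm_counts.sum(axis=0)  # per predicted class

print(f"accuracy:  {accuracy:.3f}")  # accuracy:  0.952
print("recall:   ", recall.round(3))
print("precision:", precision.round(3))
```

Accuracy works out to 833/875 ≈ 0.952, in line with the 0.95 reported for both models. Recall for class 1 is lower (about 0.93) because 32 of its 442 test points are misclassified.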

```python
# Creating a confusion matrix
cm = confusion_matrix(y_test, y_pred)

# Visualizing the confusion matrix using a heatmap
fig, ax = plt.subplots(figsize=(7,5), facecolor='#F5F5F5')
ax = sns.heatmap(cm, annot=True, fmt="d", cmap="YlOrBr", annot_kws={"size": 16})
ax.set_xlabel('Predicted labels', fontsize=14)
ax.set_ylabel('True labels', fontsize=14)
ax.set_xticklabels(['Class 0', 'Class 1'], fontsize=13)
ax.set_yticklabels(['Class 0', 'Class 1'], fontsize=13)
plt.show()
```

The confusion matrix shows the following:

- For class 0, 423 points are classified correctly and 10 incorrectly.
- For class 1, 410 points are classified correctly and 32 incorrectly.

In total, 833 of the 875 test points are classified correctly (833/875 ≈ 0.95), which matches the accuracy reported above. The confusion matrix is shown below:

Bonus: Visualizing the Predicted Labels

After applying the Naive Bayes classifier and evaluating its performance, we plot the test set with both the correct labels and the predicted labels. This gives a visual sense of how well the classifier works.

We create two scatter plots: one for the test set with the correct labels, and another one for the test set with the predicted labels.

```python
# Creating a figure and a set of subplots
fig, ax = plt.subplots(1,2, figsize=(14,5), facecolor='#F5F5F5')

# Plotting the test set with the correct labels
ax[0].set_facecolor('#F5F5F5')
scatter = ax[0].scatter(X_test[:, 0], X_test[:, 1], c=y_test, s=50, cmap=color_map, alpha = 0.4)
ax[0].legend(handles=scatter.legend_elements()[0], labels=['0', '1'], title = 'Classes')
ax[0].set_title("Correct Classes", fontsize=14)

# Creating a new color map for the predicted labels
color_map2 = ListedColormap(['mediumseagreen','mediumblue', 'firebrick'])

# Finding the indices of the points that were classified incorrectly
incorrect_indices = np.where(y_pred != y_test)[0]

# The labels of the points that were classified incorrectly are set to 2
y_plot = y_pred.copy()
y_plot[incorrect_indices] = 2

# Plotting the test set with the predicted labels
ax[1].set_facecolor('#F5F5F5')
scatter = ax[1].scatter(X_test[:, 0], X_test[:, 1], c=y_plot, s=50, cmap=color_map2, alpha = 0.4)
ax[1].legend(handles=scatter.legend_elements()[0], labels=['0', '1', 'Incorrect'], title = 'Classes')
ax[1].set_title("Predicted Classes", fontsize=14)
```

These plots are as follows:

In the second plot, the red points are those that were incorrectly classified by the model.

As we have shown, the Naive Bayes algorithm is a powerful and efficient tool for classification tasks. Despite its simplicity and the "naive" assumption of feature independence, it often performs surprisingly well in practice, even when the independence assumption is violated. Its efficiency and scalability make it particularly suitable for large datasets and applications where speed is crucial.

Through this post, we have seen how the Naive Bayes model can be implemented from scratch and applied to a synthetic dataset. We have also compared its performance with the implementation provided by the sklearn library. This exploration has demonstrated the practicality and effectiveness of the Naive Bayes algorithm. As with any machine learning model, it's important to understand its strengths and limitations, in order to consider them when we select an algorithm for a specific task.
